Blog

- Holding Hands With a Loved One Synchronizes Heart Rate and Brainwaves, Decreases Pain

- By Jason von Stietz, Ph.D.

- March 25, 2018


Photo Credit: Picture Explore

Why does holding hands with a loved one seem to comfort us? Researchers at the University of Colorado, Boulder and the University of Haifa examined the relationship between holding hands with a spouse or partner and the experience of physical pain. Findings showed that when couples held hands, their brainwaves and respiration synchronized and their experience of pain from a mild burn decreased. The study was discussed in a recent article in Neuroscience News:

“We have developed a lot of ways to communicate in the modern world and we have fewer physical interactions,” said lead author Pavel Goldstein, a postdoctoral pain researcher in the Cognitive and Affective Neuroscience Lab at CU Boulder. “This paper illustrates the power and importance of human touch.”

The study is the latest in a growing body of research exploring a phenomenon known as “interpersonal synchronization,” in which people physiologically mirror the people they are with. It is the first to look at brain wave synchronization in the context of pain, and offers new insight into the role brain-to-brain coupling may play in touch-induced analgesia, or healing touch.

Goldstein came up with the experiment after, during the delivery of his daughter, he discovered that when he held his wife’s hand, it eased her pain.

“I wanted to test it out in the lab: Can one really decrease pain with touch, and if so, how?”

He and his colleagues at the University of Haifa recruited 22 heterosexual couples, ages 23 to 32, who had been together for at least one year, and put them through several two-minute scenarios as electroencephalography (EEG) caps measured their brainwave activity. The scenarios included sitting together not touching; sitting together holding hands; and sitting in separate rooms. Then they repeated the scenarios as the woman was subjected to mild heat pain on her arm.

Merely being in each other’s presence, with or without touch, was associated with some brain wave synchronicity in the alpha mu band, a wavelength associated with focused attention. If they held hands while she was in pain, the coupling increased the most.

Researchers also found that when she was in pain and he couldn’t touch her, the coupling of their brain waves diminished. This matched the findings from a previously published paper from the same experiment which found that heart rate and respiratory synchronization disappeared when the male study participant couldn’t hold her hand to ease her pain.

“It appears that pain totally interrupts this interpersonal synchronization between couples and touch brings it back,” says Goldstein.

Subsequent tests of the male partner’s level of empathy revealed that the more empathetic he was to her pain the more their brain activity synced. The more synchronized their brains, the more her pain subsided.

How exactly could coupling of brain activity with an empathetic partner kill pain?

More studies are needed to find out, stressed Goldstein. But he and his co-authors offer a few possible explanations. Empathetic touch can make a person feel understood, which in turn – according to previous studies – could activate pain-killing reward mechanisms in the brain.

“Interpersonal touch may blur the borders between self and other,” the researchers wrote.

The study did not explore whether the same effect would occur with same-sex couples, or what happens in other kinds of relationships. The takeaway for now, Goldstein said: Don’t underestimate the power of a hand-hold.

“You may express empathy for a partner’s pain, but without touch it may not be fully communicated,” he said.

Read the original article Here


- Key Difference in the Brains of Super-Agers

- By Jason von Stietz, M.A.

- March 9, 2018


Getty Images

Why do some people in their 90s seem mentally sharp when others do not? Researchers at Northwestern University examined the brains and cognitive abilities of “super-agers.” Brain scans showed that super-agers had a significantly thicker cortex than their average peers, and autopsies found far more von Economo neurons in a deep brain region important for attention. The study was discussed in a recent article in Medical Xpress:

It's pretty extraordinary for people in their 80s and 90s to keep the same sharp memory as someone several decades younger, and now scientists are peeking into the brains of these "superagers" to uncover their secret.

The work is the flip side of the disappointing hunt for new drugs to fight or prevent Alzheimer's disease.

Instead, "why don't we figure out what it is we might need to do to maximize our memory?" said neuroscientist Emily Rogalski, who leads the SuperAging study at Northwestern University in Chicago.

Parts of the brain shrink with age, one of the reasons why most people experience a gradual slowing of at least some types of memory late in life, even if they avoid diseases like Alzheimer's.

But it turns out that superagers' brains aren't shrinking nearly as fast as their peers'. And autopsies of the first superagers to die during the study show they harbor a lot more of a special kind of nerve cell in a deep brain region that's important for attention, Rogalski told a recent meeting of the American Association for the Advancement of Science.

These elite elders are "more than just an oddity or a rarity," said neuroscientist Molly Wagster of the National Institute on Aging, which helps fund the research. "There's the potential for learning an enormous amount and applying it to the rest of us, and even to those who may be on a trajectory for some type of neurodegenerative disease."

What does it take to be a superager? A youthful brain in the body of someone 80 or older. Rogalski's team has given a battery of tests to more than 1,000 people who thought they'd qualify, and only about 5 percent pass. The key memory challenge: Listen to 15 unrelated words, and a half-hour later recall at least nine. That's the norm for 50-year-olds, but the average 80-year-old recalls five. Some superagers remember them all.

"It doesn't mean you're any smarter," stressed superager William "Bill" Gurolnick, who turns 87 next month and joined the study two years ago.

Nor can he credit protective genes: Gurolnick's father developed Alzheimer's in his 50s. He thinks his own stellar memory is bolstered by keeping busy. He bikes, and plays tennis and water volleyball. He stays social through regular lunches and meetings with a men's group he co-founded.

"Absolutely that's a critical factor about keeping your wits about you," exclaimed Gurolnick, fresh off his monthly gin game.

Rogalski's superagers tend to be extroverts and report strong social networks, but otherwise they come from all walks of life, making it hard to find a common trait for brain health. Some went to college, some didn't. Some have high IQs, some are average. She's studied people who've experienced enormous trauma, including a Holocaust survivor; fitness buffs and smokers; teetotalers and those who tout a nightly martini.

But deep in their brains is where she's finding compelling hints that somehow, superagers are more resilient against the ravages of time.

Early on, brain scans showed that a superager's cortex—an outer brain layer critical for memory and other key functions—is much thicker than normal for their age. It looks more like the cortex of healthy 50- and 60-year-olds.

It's not clear if they were born that way. But Rogalski's team found another possible explanation: A superager's cortex doesn't shrink as fast. Over 18 months, average 80-somethings experienced more than twice the rate of loss.

Another clue: Deeper in the brain, that attention region is larger in superagers, too. And inside, autopsies showed that brain region was packed with unusually large, spindly neurons, a special and little understood type called von Economo neurons thought to play a role in social processing and awareness.

The superagers had four to five times more of those neurons than the typical octogenarian, Rogalski said—more even than the average young adult.

The Northwestern study isn't the only attempt at unraveling long-lasting memory. At the University of California, Irvine, Dr. Claudia Kawas studies the oldest-old, people 90 and above. Some have Alzheimer's. Some have maintained excellent memory and some are in between.

About 40 percent of the oldest-old who showed no symptoms of dementia in life nonetheless have full-fledged signs of Alzheimer's disease in their brains at death, Kawas told the AAAS meeting.

Rogalski also found varying amounts of amyloid and tau, hallmark Alzheimer's proteins, in the brains of some superagers.

Now scientists are exploring how these people deflect damage. Maybe superagers have different pathways to brain health.

"They are living long and living well," Rogalski said. "Are there modifiable things we can think about today, in our everyday lives" to do the same?

View the original article here


- Why Some People Cannot See Pictures in Their Mind

- By Jason von Stietz, M.A.

- February 9, 2018


Some people are able to clearly visualize objects in their “mind’s eye” while others are unable to visualize objects at all. The inability to picture something in your mind is called aphantasia. Recently, researchers at the University of New South Wales investigated this phenomenon. The findings were discussed in a recent article in Neuroscience News:

Mind blind

One of the creators of the Firefox internet browser, Blake Ross, realised his experience of visual imagery was vastly different from most people when he read about a man who lost his ability to imagine after surgery. In a Facebook post, Ross said:

“What do you mean ‘lost’ his ability? […] Shouldn’t we be amazed he ever had that ability?”

We’ve heard from many people who have experienced a similar epiphany to Ross. They too were astonished to discover that their complete lack of ability to picture visual imagery was different from the norm.

Visual imagery is involved in many everyday tasks, such as remembering the past, navigation and facial recognition, to name a few. Anecdotal reports from our aphantasic participants indicate that while they are able to remember things from their past, they don’t experience these memories in the same way as someone with strong imagery. They often describe them as a conceptual list of things that occurred rather than a movie reel playing in their mind.

As Ross describes it, he can ruminate on the “concept” of a beach. He knows there’s sand and water and other facts about beaches. But he can’t conjure up beaches he’s visited in his mind, nor does he have any capacity to create a mental image of a beach.

The idea some people are born wholly unable to imagine is not new. In the late 1800s, British scientist Sir Francis Galton conducted research asking colleagues and the general population to describe the quality of their internal imagery. These studies, however, relied on self-reports, which are subjective in nature. They depend on a person’s ability to assess their own mental processes – called introspection.

But how can I know that what you see in your mind is different to what I see? Perhaps we see the same thing but describe it differently. Perhaps we see different things but describe them the same.

Some researchers have suggested aphantasia may actually be a case of poor introspection; that aphantasics are in fact creating the same images in their mind as perhaps you and I, but it is their description of them that differs. Another idea is that aphantasics create internal images just like everyone else, but are not conscious of them. This means it’s not that their minds are blind, but they lack an internal consciousness of such images.

In a recent study we set out to investigate whether aphantasics are really “blind in the mind” or if they have difficulty introspecting reliably.

Binocular rivalry

To assess visual imagery objectively, without having to rely on someone’s ability to describe what they imagine, we used a technique known as binocular rivalry – where perception alternates between different images presented one to each eye. To induce this, participants wear 3D red-green glasses, where one eye sees a red image and the other eye a green one. When images are superimposed onto the glasses, we can’t see both images at once, so our brain is constantly switching from the green to the red image.

But we can influence which of the coloured images someone will see in the binocular rivalry display. One way is by getting them to imagine one of the two images beforehand. For example, if I asked you to imagine a green image, you will be more likely to see the green image once you’ve put on 3D glasses. And the stronger your imagery is the more frequently you will see the image you imagine.

We use how often a person sees the image they imagine as a measure of objective visual imagery. Because we’re not relying on the participant rating the vividness of the image in their mind, but on what they physically see in the binocular rivalry display, it removes the need for subjective introspection.

In our study, we asked self-described aphantasics to imagine either a red circle with horizontal lines or a green circle with vertical lines for six seconds before being presented with a binocular rivalry display while wearing the glasses. They then indicated which image they saw. They repeated this for close to 100 trials.

We found that when the aphantasics tried to form a mental image, their attempted imagined picture had no effect on what they saw in the binocular rivalry illusion. This suggests they don’t have a problem with introspection, but appear to have no visual imagery.
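The priming measure described above can be sketched in a few lines of Python. This is only an illustration, not the researchers' analysis code: the function names, the `"red"`/`"green"` labels, and the four-trial session are hypothetical, and the exact binomial test against a 50 percent chance level is an assumed way of asking whether imagery biased what was seen.

```python
from math import comb


def priming_score(imagined, seen):
    """Return the fraction of trials where the reported image matched the imagined one."""
    if len(imagined) != len(seen) or not imagined:
        raise ValueError("need two equal-length, non-empty trial lists")
    matches = sum(1 for want, got in zip(imagined, seen) if want == got)
    return matches / len(imagined)


def binomial_two_sided_p(matches, trials, chance=0.5):
    """Exact two-sided binomial p-value for `matches` out of `trials` against `chance`."""
    def pmf(k):
        return comb(trials, k) * chance**k * (1 - chance) ** (trials - k)

    observed = pmf(matches)
    # Sum the probability of every outcome at least as unlikely as the observed one.
    return sum(pmf(k) for k in range(trials + 1) if pmf(k) <= observed * (1 + 1e-9))


# Hypothetical four-trial session, for illustration only.
imagined = ["red", "green", "red", "green"]
seen = ["red", "green", "green", "green"]
print(priming_score(imagined, seen))  # 0.75
```

Under these assumptions, a score close to 0.5 means the imagined image had no measurable influence on what was seen, which is the pattern reported for the aphantasic participants. A score well above 0.5 would indicate that imagery primed perception.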

Why some people are mind blind

Research in the general population shows that visual imagery involves a network of brain activity spanning from the frontal cortex all the way to the visual areas at the back of the brain.

Current theories propose that when we imagine something, we try to reactivate the same pattern of activity in our brain as when we saw the image before. And the better we are able to do this, the stronger our visual imagery is. It might be that aphantasic individuals are not able to reactivate these traces enough to experience visual imagery, or that they use a completely different network when they try to complete tasks that involve visual imagery.

But there may be a silver lining to not being able to imagine visually. Overactive visual imagery is thought to play a role in addiction and cravings, as well as the development of anxiety disorders such as PTSD. It may be that the inability to visualise might anchor people in the present and allow them to live more fully in the moment.

Understanding why some people are unable to create these images in mind might allow us to increase their ability to imagine, and also possibly help us to tone down imagery in those for whom it has become overactive.

View the original article Here


- Exercise Recommended in Treatment of Mild Cognitive Impairment

- By Jason von Stietz, MA

- January 11, 2018


Getty Images

According to the American Academy of Neurology, more than 6 percent of people in their 60s have mild cognitive impairment. An updated guideline led by a Mayo Clinic researcher recommends regular physical exercise, totaling 150 minutes a week, as part of managing the symptoms of mild cognitive impairment. This new guideline was discussed in a recent article in Medical Xpress:

The recommendation is part of an updated guideline for mild cognitive impairment published in the Dec. 27 online issue of Neurology, the medical journal of the American Academy of Neurology.

"Regular physical exercise has long been shown to have heart health benefits, and now we can say exercise also may help improve memory for people with mild cognitive impairment," says Ronald Petersen, M.D., Ph.D., lead author, director of the Alzheimer's Disease Research Center, Mayo Clinic, and the Mayo Clinic Study of Aging. "What's good for your heart can be good for your brain." Dr. Petersen is the Cora Kanow Professor of Alzheimer's Disease Research.

Mild cognitive impairment is an intermediate stage between the expected cognitive decline of normal aging and the more serious decline of dementia. Symptoms can involve problems with memory, language, thinking and judgment that are greater than normal age-related changes.

Generally, these changes aren't severe enough to significantly interfere with day-to-day life and usual activities. However, mild cognitive impairment may increase the risk of later progressing to dementia caused by Alzheimer's disease or other neurological conditions. But some people with mild cognitive impairment never get worse, and a few eventually get better.

The academy's guideline authors developed the updated recommendations on mild cognitive impairment after reviewing all available studies. Six-month studies showed twice-weekly workouts may help people with mild cognitive impairment as part of an overall approach to managing their symptoms.

Dr. Petersen encourages people to do aerobic exercise: Walk briskly, jog, whatever you like to do, for 150 minutes a week—30 minutes, five times or 50 minutes, three times. The level of exertion should be enough to work up a bit of a sweat but doesn't need to be so rigorous that you can't hold a conversation. "Exercising might slow down the rate at which you would progress from mild cognitive impairment to dementia," he says.

Another guideline update says clinicians may recommend cognitive training for people with mild cognitive impairment. Cognitive training uses repetitive memory and reasoning exercises that may be computer-assisted or done in person individually or in small groups. There is weak evidence that cognitive training may improve measures of cognitive function, the guideline notes.

The guideline did not recommend dietary changes or medications. There are no drugs for mild cognitive impairment approved by the U.S. Food and Drug Administration.

More than 6 percent of people in their 60s have mild cognitive impairment across the globe, and the condition becomes more common with age, according to the American Academy of Neurology. More than 37 percent of people 85 and older have it.

With such prevalence, finding lifestyle factors that may slow down the rate of cognitive impairment can make a big difference to individuals and society, Dr. Petersen notes.

"We need not look at aging as a passive process; we can do something about the course of our aging," he says. "So if I'm destined to become cognitively impaired at age 72, I can exercise and push that back to 75 or 78. That's a big deal."

The guideline, endorsed by the Alzheimer's Association, updates a 2001 academy recommendation on mild cognitive impairment. Dr. Petersen was involved in the development of the first clinical trial for mild cognitive impairment and continues as a worldwide leader researching this stage of disease when symptoms possibly could be stopped or reversed.

View the article here


- Callous Unemotional Traits Related to Brain Structure in Boys

- By Jason von Stietz, MA

- December 28, 2017


Photo Credit: Neuroscience News

Researchers at the University of Basel have found that a brain structure is larger in boys with higher levels of callous-unemotional traits. Using magnetic resonance imaging, they found that the anterior insula, a region involved in emotion and empathy, has greater volume in typically developing boys who score higher on these traits. This relationship was not found in girls. The study was discussed in a recent article in Neuroscience News:

Callous-unemotional traits have been linked to deficits in development of the conscience and of empathy. Children and adolescents with these traits react less to negative stimuli; they often prefer risky activities and show less caution or fear. In recent years, researchers and doctors have given these personality traits increased attention, since they have been associated with the development of more serious and persistent antisocial behavior.

However, until now, most research in this area has focused on studying callous-unemotional traits in populations with a psychiatric diagnosis, especially conduct disorder. This meant that it was unclear whether associations between callous-unemotional traits and brain structure were only present in clinical populations with increased aggression, or whether the antisocial behavior and aggression explained the brain differences.

Using magnetic resonance imaging, the researchers were able to take a closer look at the brain development of typically-developing teenagers to find out whether callous-unemotional traits are linked to differences in brain structure. The researchers were particularly interested to find out if the relationship between callous-unemotional traits and brain structure differs between boys and girls.

Only boys show differences in brain structure

The findings show that in typically-developing boys, the volume of the anterior insula – a brain region implicated in recognizing emotions in others and empathy – is larger in those with higher levels of callous-unemotional traits. This variation in brain structure was only seen in boys, but not in girls with the same personality traits.

“Our findings demonstrate that callous-unemotional traits are related to differences in brain structure in typically-developing boys without a clinical diagnosis,” explains lead author Nora Maria Raschle from the University and the Psychiatric Hospital of the University of Basel in Switzerland. “In a next step, we want to find out what kind of trigger leads some of these children to develop mental health problems later in life while others never develop problems.”

Read the article Here


- Brain Implant Boosts Short-Term and Working Memory

- By Jason von Stietz, MA

- November 30, 2017


Researchers from the University of Southern California have used a brain implant to help the brains of study participants function more effectively. Electrodes were implanted into participants' brains. Once the pattern of activity associated with optimal memory performance was determined, the electrodes were used to stimulate the brain, reinforcing that pattern. Findings indicated that short-term memory improved by 15% and working memory improved by 25%. The study was discussed in a recent article in Futurism:

With everyone from Elon Musk to MIT to the U.S. Department of Defense researching brain implants, it seems only a matter of time before such devices are ready to help humans extend their natural capabilities. Now, a professor from the University of Southern California (USC) has demonstrated the use of a brain implant to improve the human memory, and the device could have major implications for the treatment of one of the U.S.’s deadliest diseases.

Dong Song is a research associate professor of biomedical engineering at USC, and he recently presented his findings on a “memory prosthesis” during a meeting of the Society for Neuroscience in Washington D.C. According to a New Scientist report, the device is the first to effectively improve the human memory.

To test his device, Song’s team enlisted the help of 20 volunteers who were having brain electrodes implanted for the treatment of epilepsy.

Once implanted in the volunteers, Song’s device could collect data on their brain activity during tests designed to stimulate either short-term memory or working memory. The researchers then determined the pattern associated with optimal memory performance and used the device’s electrodes to stimulate the brain following that pattern during later tests.

Based on their research, such stimulation improved short-term memory by roughly 15 percent and working memory by about 25 percent. When the researchers stimulated the brain randomly, performance worsened.

As Song told New Scientist, “We are writing the neural code to enhance memory function. This has never been done before.”

A GROWING PROBLEM

While a better memory could be useful for students cramming for tests or those of us with trouble remembering names, it could be absolutely life-changing for people affected by dementia and Alzheimer’s.

As Bill Gates noted when announcing plans to invest $100 million of his own money into dementia and Alzheimer’s research, the disease is a multi-level problem that’s positioned to get even worse.

Age is the greatest risk factor for Alzheimer’s, according to the Alzheimer’s Association, with the vast majority of sufferers over the age of 65. With advances in medicine and healthcare continuously increasing how long we live, that segment of the population is growing dramatically, and by 2030, 20 percent of U.S. citizens are expected to be older than 65.

This increase in the number of potential dementia sufferers can be costly in both a financial and emotional sense. In 2016, the total cost of healthcare and long-term care for those suffering from dementia and Alzheimer’s disease was an estimated $236 billion, and according to the Alzheimer’s Association, the more severe a person’s cognitive impairment, the higher the rates of depression in their familial caregivers.

Of course, further testing is required before Song’s device could be approved as a treatment for dementia or Alzheimer’s, but if it is able to help those patients regain even part of their lost memory function, the impact would be felt not only by the patients themselves, but their families and even the economy at large.

Read the article Here


- Neural Signatures of Suicidal Thoughts Detected by fMRI

- By Jason von Stietz, MA

- November 26, 2017


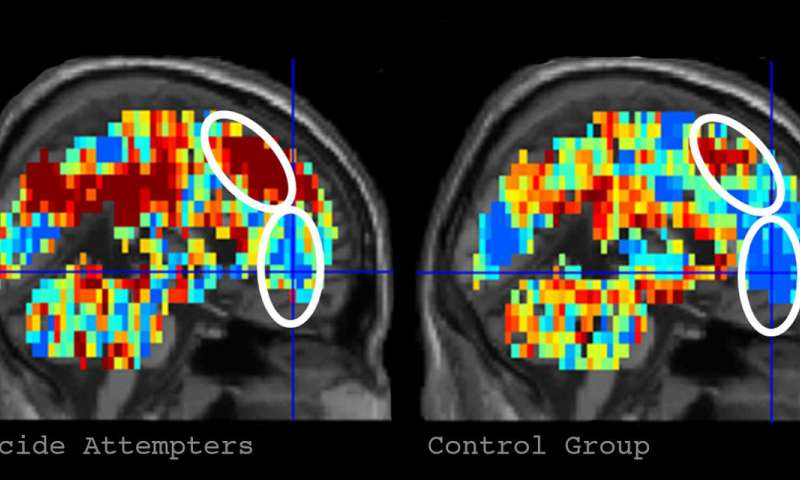

Credit: Carnegie Mellon University

Can suicidal thoughts be detected by a machine? Researchers led by Marcel Just of Carnegie Mellon University and David Brent of the University of Pittsburgh investigated whether fMRI brain scans combined with machine learning (algorithms that learn patterns from data rather than following explicitly programmed rules) can detect neural signatures of suicidal thoughts. The approach distinguished suicidal participants from controls with 91% accuracy, and identified which of the suicidal participants had previously attempted suicide with 94% accuracy. The study was discussed in a recent article in Medical Xpress:

Suicidal risk is notoriously difficult to assess and predict, and suicide is the second-leading cause of death among young adults in the United States. Published in Nature Human Behaviour, the study offers a new approach to assessing psychiatric disorders.

"Our latest work is unique insofar as it identifies concept alterations that are associated with suicidal ideation and behavior, using machine-learning algorithms to assess the neural representation of specific concepts related to suicide. This gives us a window into the brain and mind, shedding light on how suicidal individuals think about suicide and emotion related concepts. What is central to this new study is that we can tell whether someone is considering suicide by the way that they are thinking about the death-related topics," said Just, the D.O. Hebb University Professor of Psychology in CMU's Dietrich College of Humanities and Social Sciences.

For the study, Just and Brent, who holds an endowed chair in suicide studies and is a professor of psychiatry, pediatrics, epidemiology and clinical and translational science at Pitt, presented a list of 10 death-related words, 10 words relating to positive concepts (e.g. carefree) and 10 words related to negative ideas (e.g. trouble) to two groups of 17 people with known suicidal tendencies and 17 neurotypical individuals.

They applied the machine-learning algorithm to six word-concepts that best discriminated between the two groups as the participants thought about each one while in the brain scanner. These were death, cruelty, trouble, carefree, good and praise. Based on the brain representations of these six concepts, their program was able to identify with 91 percent accuracy whether a participant was from the control or suicidal group.

Then, focusing on the suicidal ideators, they used a similar approach to see if the algorithm could identify participants who had made a previous suicide attempt from those who only thought about it. The program was able to accurately distinguish the nine who had attempted to take their lives with 94 percent accuracy.
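To make the reported accuracy figures concrete, the following Python sketch shows one common way such a number is computed for a small sample: leave-one-out cross-validation, where each participant is classified by a model built from everyone else. It is not the study's pipeline. The nearest-centroid classifier, the function names, and the toy two-feature data are all assumptions chosen for illustration, and the article does not say which classifier or validation scheme the researchers used.

```python
def centroid(rows):
    """Average each feature across a list of equal-length feature vectors."""
    return [sum(column) / len(rows) for column in zip(*rows)]


def squared_distance(a, b):
    """Squared Euclidean distance between two feature vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def leave_one_out_accuracy(features, labels):
    """Classify each participant from the others' group centroids; return the fraction correct."""
    correct = 0
    for held_out in range(len(features)):
        # Group every participant except the held-out one by label.
        by_label = {}
        for index, (row, label) in enumerate(zip(features, labels)):
            if index != held_out:
                by_label.setdefault(label, []).append(row)
        centroids = {label: centroid(rows) for label, rows in by_label.items()}
        # Predict the label whose centroid is closest to the held-out participant.
        predicted = min(
            centroids,
            key=lambda label: squared_distance(features[held_out], centroids[label]),
        )
        if predicted == labels[held_out]:
            correct += 1
    return correct / len(features)


# Toy, made-up feature vectors standing in for per-concept brain activation summaries.
features = [[0.0, 0.1], [0.2, 0.0], [0.1, 0.2], [1.0, 0.9], [0.8, 1.0], [0.9, 1.1]]
labels = ["control", "control", "control", "ideator", "ideator", "ideator"]
print(leave_one_out_accuracy(features, labels))
```

As a rough sanity check on scale, 91 percent of the 34 participants works out to about 31 correct classifications, and 94 percent of the 17 ideators to about 16.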

"Further testing of this approach in a larger sample will determine its generality and its ability to predict future suicidal behavior, and could give clinicians in the future a way to identify, monitor and perhaps intervene with the altered and often distorted thinking that so often characterizes seriously suicidal individuals," said Brent.

To further understand what caused the suicidal and non-suicidal participants to have different brain activation patterns for specific thoughts, Just and Brent used an archive of neural signatures for emotions (particularly sadness, shame, anger and pride) to measure the amount of each emotion that was evoked in a participant's brain by each of the six discriminating concepts. The machine-learning program was able to accurately predict which group the participant belonged to with 85 percent accuracy based on the differences in the emotion signatures of the concepts.

"The benefit of this latter approach, sometimes called explainable artificial intelligence, is more revealing of what discriminates the two groups, namely the types of emotions that the discriminating words evoke," Just said. "People with suicidal thoughts experience different emotions when they think about some of the test concepts. For example, the concept of 'death' evoked more shame and more sadness in the group that thought about suicide. This extra bit of understanding may suggest an avenue to treatment that attempts to change the emotional response to certain concepts."

Just and Brent are hopeful that the findings from this basic cognitive neuroscience research can be used to save lives.

"The most immediate need is to apply these findings to a much larger sample and then use it to predict future suicide attempts," said Brent.

Just and his CMU colleague Tom Mitchell first pioneered this application of machine learning to brain imaging that identifies concepts from their brain activation signatures. Since then, the research has been extended to identify emotions and multi-concept thoughts from their neural signatures and also to uncover how complex scientific concepts are coded as they are being learned.

Read the article Here


- Chronic Alcohol Consumption Kills Brain Stem Cells

- By Jason von Stietz, MA

- November 19, 2017


Untreated chronic alcohol abuse can lead to severe brain damage. Researchers at The University of Texas Medical Branch at Galveston recently investigated the impact of alcohol consumption on stem cells in the brains of mice. Findings indicated that chronic alcohol consumption killed brain stem cells, reduced the production of new nerve cells, and affected females more severely than males. The study was discussed in a recent article in Medical Xpress:

Because the brain stem cells create new nerve cells and are important to maintaining normal cognitive function, this study possibly opens a door to combating chronic alcoholism.

The researchers also found that brain stem cells in key brain regions of adult mice respond differently to alcohol exposure, and they show for the first time that these changes are different for females and males. The findings are available in Stem Cell Reports.

Chronic alcohol abuse can cause severe brain damage and neurodegeneration. Scientists once believed that the number of nerve cells in the adult brain was fixed early in life and the best way to treat alcohol-induced brain damage was to protect the remaining nerve cells.

"The discovery that the adult brain produces stem cells that create new nerve cells provides a new way of approaching the problem of alcohol-related changes in the brain," said Dr. Ping Wu, UTMB professor in the department of neuroscience and cell biology. "However, before the new approaches can be developed, we need to understand how alcohol impacts the brain stem cells at different stages in their growth, in different brain regions and in the brains of both males and females."

To study the impact of long-term alcohol consumption on brain stem cells, Wu and her colleagues used a cutting-edge technique that allows them to tag the cells and observe how they migrate and develop into specialized nerve cells over time.

Wu said that chronic alcohol drinking killed most brain stem cells and reduced the production and development of new nerve cells.

The researchers found that the effects of repeated alcohol consumption differed across brain regions. The brain region most susceptible to the effects of alcohol was one of two brain regions where new brain cells are created in adults.

They also noted that female mice showed more severe deficits than males. The females displayed more severe intoxication behaviors and a greater reduction in the pool of stem cells in the subventricular zone.

Using this model, scientists expect to learn more about how alcohol interacts with brain stem cells, which will ultimately lead to a clearer understanding of how best to treat and cure alcoholism.

Read the article Here

- Should Schizophrenia Be Reconceptualized?

- By Jason von Stietz, MA

- October 26, 2017

Photo Credit: Getty Images

People commonly view schizophrenia as a chronic and hopeless brain disease. However, some researchers and clinicians are beginning to believe that this is not the case. Rather, they believe that what we now know as schizophrenia is the most severe end of a spectrum of psychosis, and that it is actually many different diseases and disorders that we currently lump together. Sciencr discussed this new conceptualization in a recent article:

Today, having a diagnosis of schizophrenia is associated with a life-expectancy reduction of nearly two decades. By some criteria, only one in seven people recover. Despite heralded advances in treatments, staggeringly, the proportion of people who recover hasn’t increased over time. Something is profoundly wrong.

Part of the problem turns out to be the concept of schizophrenia itself.

Arguments that schizophrenia is a distinct disease have been “fatally undermined”. Just as we now have the concept of autism spectrum disorder, psychosis (typically characterised by distressing hallucinations, delusions, and confused thoughts) is also argued to exist along a continuum and in degrees. Schizophrenia is the severe end of a spectrum or continuum of experiences.

Jim van Os, a professor of psychiatry at Maastricht University, has argued that we cannot shift to this new way of thinking without changing our language. As such, he proposes the term schizophrenia “should be abolished”. In its place, he suggests the concept of a psychosis spectrum disorder.

Another problem is that schizophrenia is portrayed as a “hopeless chronic brain disease”. As a result, some people given this diagnosis, and some parents, have been told cancer would have been preferable, as it would be easier to cure. Yet this view of schizophrenia is only possible by excluding people who do have positive outcomes. For example, some who recover are effectively told that “it mustn’t have been schizophrenia after all”.

Schizophrenia, when understood as a discrete, hopeless and deteriorating brain disease, argues van Os, “does not exist”.

BREAKING DOWN BREAKDOWNS

Schizophrenia may instead turn out to be many different things. The eminent psychiatrist Sir Robin Murray describes how:

I expect to see the end of the concept of schizophrenia soon … the syndrome is already beginning to breakdown, for example, into those cases caused by copy number [genetic] variations, drug abuse, social adversity, etc. Presumably this process will accelerate, and the term schizophrenia will be confined to history, like “dropsy”.

Research is now exploring the different ways people may end up with many of the experiences deemed characteristic of schizophrenia: hallucinations, delusions, disorganised thinking and behaviour, apathy and flat emotion.

Indeed, one past error has been to mistake a path for the path or, more commonly, to mistake a back road for a motorway. For example, based on their work on the parasite Toxoplasma gondii, which is transmitted to humans via cats, researchers E. Fuller Torrey and Robert Yolken have argued that “the most important etiological agent [cause of schizophrenia] may turn out to be a contagious cat”. It will not.

Evidence does suggest that exposure to Toxoplasma gondii when young can increase the odds of someone being diagnosed with schizophrenia. However, the size of this effect involves less than a twofold increase in the odds of someone being diagnosed with schizophrenia. This is, at best, comparable to other risk factors, and probably much lower.

For example, suffering childhood adversity, using cannabis, and having childhood viral infections of the central nervous system, all increase the odds of someone being diagnosed with a psychotic disorder (such as schizophrenia) by around two to threefold. More nuanced analyses reveal much higher numbers.

Compared with non-cannabis users, the daily use of high-potency, skunk-like cannabis is associated with a fivefold increase in the odds of someone developing psychosis. Compared with someone who has not suffered trauma, those who have suffered five different types of trauma (including sexual and physical abuse) see their odds of developing psychosis increase more than fiftyfold.

Other routes to “schizophrenia” are also being identified. Around 1% of cases appear to stem from the deletion of a small stretch of DNA on chromosome 22, referred to as 22q11.2 deletion syndrome. It is also possible that a low single digit percentage of people with a schizophrenia diagnosis may have their experiences grounded in inflammation of the brain caused by autoimmune disorders, such as anti-NMDA receptor encephalitis, although this remains controversial.

All the factors above could lead to similar experiences, which we in our infancy have put into a bucket called schizophrenia. One person’s experiences may result from a brain disorder with a strong genetic basis, potentially driven by an exaggeration of the normal process of pruning connections between brain cells that happens during adolescence. Another person’s experiences may be due to a complex post-traumatic reaction. Such internal and external factors could also work in combination.

Either way, it turns out that the two extreme camps in the schizophrenia wars – those who view it as a genetically-based neurodevelopmental disorder and those who view it as a response to psychosocial factors, such as adversity – both had parts of the puzzle. The idea that schizophrenia was a single thing, reached by a single route, contributed to this conflict.

IMPLICATIONS FOR TREATMENT

Many medical conditions, such as diabetes and hypertension, can be reached by multiple routes that nevertheless impact the same biological pathways and respond to the same treatment. Schizophrenia could be like this. Indeed, it has been argued that the many different causes of schizophrenia discussed above may all have a common final effect: increased levels of dopamine.

If so, the debate about breaking schizophrenia down by factors that lead to it would be somewhat academic, as it would not guide treatment. However, there is emerging evidence that different routes to experiences currently deemed indicative of schizophrenia may need different treatments.

Preliminary evidence suggests that people with a history of childhood trauma who are diagnosed with schizophrenia are less likely to be helped by antipsychotic drugs. However, more research into this is needed and, of course, anyone taking antipsychotics should not stop taking them without medical advice. It has also been suggested that if some cases of schizophrenia are actually a form of autoimmune encephalitis, then the most effective treatment could be immunotherapy (such as corticosteroids) and plasma exchange (washing of the blood).

Yet the emerging picture here is unclear. Some new interventions, such as the family-therapy based Open Dialogue approach, show promise for a wide range of people with schizophrenia diagnoses. Both general interventions and specific ones, tailored to someone’s personal route to the experiences associated with schizophrenia, may be needed. This makes it critical to test for and ask people about all potentially relevant causes. This includes childhood abuse, which is still not being routinely asked about and identified.

The potential for different treatments to work for different people further explains the schizophrenia wars. The psychiatrist, patient or family who see dramatic beneficial effects of antipsychotic drugs naturally evangelically advocate for this approach. The psychiatrist, patient or family who see drugs not working, but alternative approaches appearing to help, laud these. Each group sees the other as denying an approach that they have experienced to work. Such passionate advocacy is to be applauded, up to the point where people are denied an approach that may work for them.

WHAT COMES NEXT?

None of this is to say the concept of schizophrenia has no use. Many psychiatrists still see it as a useful clinical syndrome that helps define a group of people with clear health needs. Here it is viewed as defining a biology that is not yet understood but which shares a common and substantial genetic basis across many patients.

Some people who receive a diagnosis of schizophrenia will find it helpful. It can help them access treatment. It can enhance support from family and friends. It can give a name to the problems they have. It can indicate they are experiencing an illness and not a personal failing. Of course, many do not find this diagnosis helpful. We need to retain the benefits and discard the negatives of the term schizophrenia, as we move into a post-schizophrenia era.

What this will look like is unclear. Japan recently renamed schizophrenia as “integration disorder”. We have seen the idea of a new “psychosis spectrum disorder”. However, historically, the classification of diseases in psychiatry has been argued to be the outcome of a struggle in which “the most famous and articulate professor won”. The future must be based on evidence and a conversation which includes the perspectives of people who suffer – and cope well with – these experiences.

Whatever emerges from the ashes of schizophrenia, it must provide better ways to help those struggling with very real experiences.

Read the article Here

- Bilateral Communication Increases in Older Adult Brain

- By Jason von Stietz, M.A.

- September 29, 2017

As the human brain ages, how does it manage to continue doing what we need it to do? A recent study found that the brain of an older adult compensates by increasing bilateral communication during a task. The study was discussed in a recent article in MedicalXpress:

The aged brain tends to show more bilateral communication than the young brain. While this finding has been observed many times, it has not been clear whether this phenomenon is helpful or harmful, and no study has directly manipulated this effect, until now.

"This study provides an explicit test of some controversial ideas about how the brain reorganizes as we age," said lead author Simon Davis, PhD. "These results suggest that the aging brain maintains healthy cognitive function by increasing bilateral communication."

Simon Davis and colleagues used a brain stimulation technique known as transcranial magnetic stimulation (TMS) to modulate brain activity of healthy older adults while they performed a memory task. When researchers applied TMS at a frequency that depressed activity in one memory region in the left hemisphere, communication increased with the same region in the right hemisphere, suggesting the right hemisphere was compensating to help with the task.

In contrast, when the same prefrontal site was excited, communication was increased only in the local network of regions in the left hemisphere. This suggested that communication between the hemispheres is a deliberate process that occurs on an "as needed" basis.

Furthermore, when the authors examined the white matter pathways between these bilateral regions, participants with stronger white matter fibers connecting left and right hemispheres demonstrated greater bilateral communication, strong evidence that structural neuroplasticity keeps the brain working efficiently in later life.

"Good roads make for efficient travel, and the brain is no different. By taking advantage of available pathways, aging brains may find an alternate route to complete the neural computations necessary for functioning," said Davis.

These results suggest that greater bilaterality in the prefrontal cortex might be the result of the aging brain adapting to the damage endured over the lifespan, in an effort to maintain normal function. Future brain-stimulation techniques could target this bilateral effect in an effort to promote communication between the hemispheres and, hopefully, engender healthy cognition throughout the lifespan.

Read the article Here


